Say No To Gas Wars: The Technology Behind the Illuvium Land Sale

This article details the technical aspects of Illuvium's recently concluded Land Sale, focusing on the backend technology and approach. Warning, it's a long one. I've tried to put enough detail in here so that other teams looking to run a similar NFT sale can take away valuable information and ensure the success of their sale. Apologies to the casual reader!

Overview

Illuvium's first Land Sale, where we sold ~20,000 NFTs representing virtual plots of land in the Illuvium homeworld, was a great success.

During the sale, which ran from June 2nd to June 6th of this year (2022), customers spent over USD 72 million of sILV2 and ETH purchasing plots of land, and the feedback was overwhelmingly positive. Gas costs per plot were typically in the $10–15 USD range, a far cry from the hundreds or thousands of dollars spent per NFT in other similar sales. And, as a cherry on top, very few customers lost gas due to failed transactions.

It would be remiss not to note that bear-market conditions played a role in this outcome, as did the community-led decision to use a Dutch Auction with starting prices set at a level where Dutch Auction mechanics can function. That said, there is no doubt that the technology used in the Illuvium Land Sale was critical to its success.

Our Approach to Technology and Technology Partners

Since our inception, Illuvium's philosophy has been to use best-of-breed solutions and always err on the side of quality over speed. This applies to every aspect of our business, from our concept art to our cinematic trailers, from our IaC platform to our JavaScript code.

Of our partners, Immutable was, of course, the most important. Their Immutable X (IMX) platform enabled gas-free minting and provided the APIs we use to drive our own marketplace experience. Immutable was also instrumental in helping us develop the mixed L1/L2 solution that was so important to our success.

Behind the scenes, several other key technology partners played a critical role in our Land Sale, specifically:

- AWS: our primary cloud provider, which hosts all our backend infrastructure.

- Vercel: the Next.js service which hosted our Land Sale website.

- Alchemy: the blockchain infrastructure provider, used for all of our Layer 1 operations.

In terms of our own development, one of our most vital architectural principles is that we prefer serverless designs and technologies and use them wherever we can. You can read more on the rationale behind this approach here:

Illuvium's Serverless Architecture Will Be "Best-Of-Breed," Says Lead Server Engineer John…

The Land Sale architecture is no exception, and the only service we use that we don't consider a true serverless solution is Amazon OpenSearch. It is a fully managed service, our next best preference, but still exposes server-like concepts such as the type and number of nodes that make up the cluster.

Land Sale Architecture

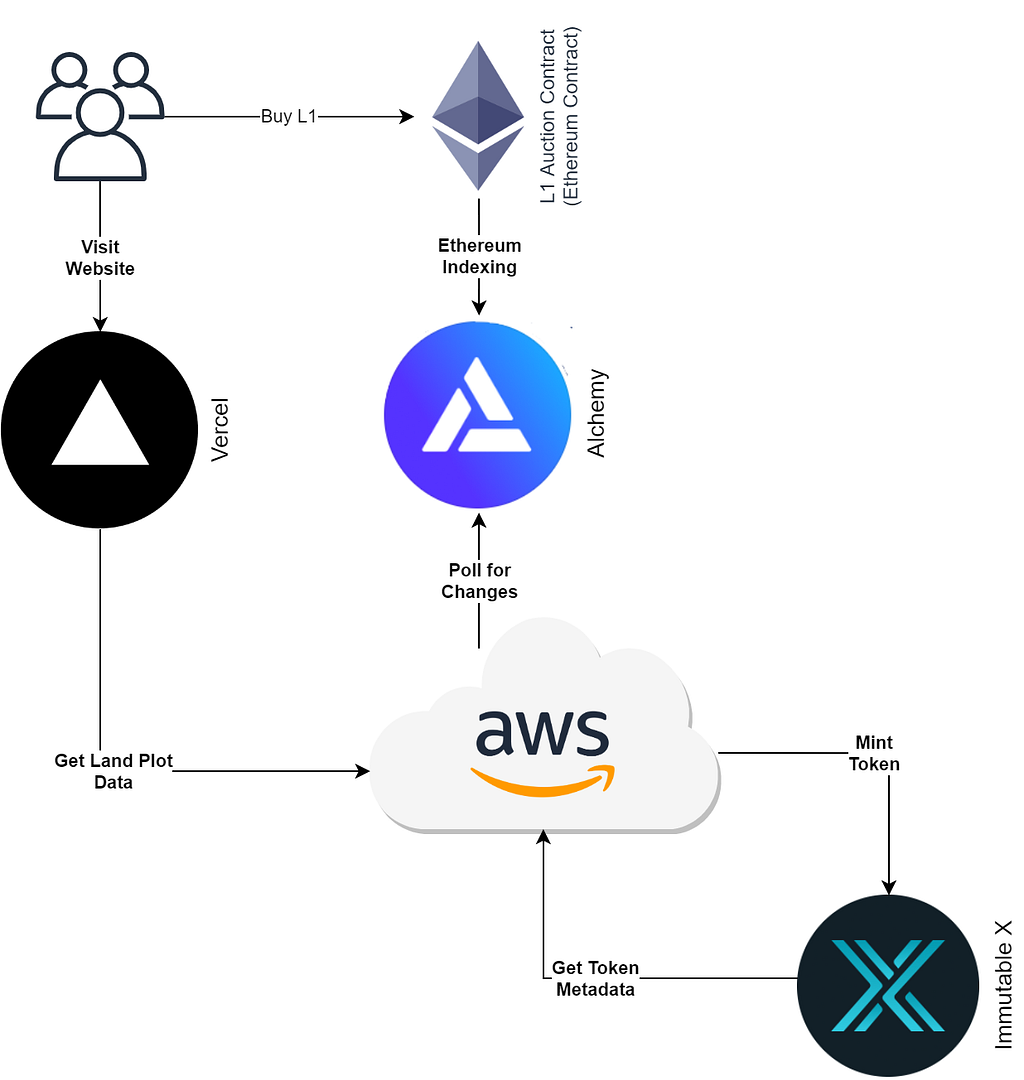

The original plan for the Illuvium Land Sale was to operate purely on L1. We knew we wanted to use a Dutch Auction quite early, and that wasn't a capability available on IMX yet. However, after discussion with Immutable, we decided on a hybrid L1/L2 approach. The auction would operate on L1, but minting would occur on L2.

The approach, at least conceptually, is quite simple: when the L1 purchase function completes, it emits an event to the blockchain indicating the purchase has been made. The event includes data about the purchase, such as Plot ID and Location. Our backend responds to these events by minting the corresponding token on L2 (IMX). We show this view in the figure below.

The '20,000 ft' view of the Land Sale Architecture

Plot Generation

A critical facet of the Land Sale was that the full plot details were unknown before purchase.

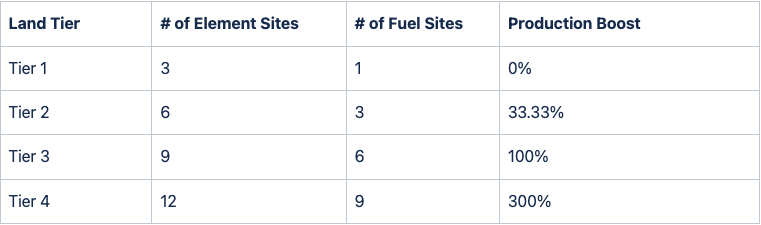

In Illuvium: Zero, the game attached to the Illuvium Land Plots, particular areas called Sites generate resources in the game. Some of these resources correspond to ERC-20 tokens called Fuel and hold value as the primary resources used in the Illuvium universe. A plot with more Sites is more valuable than a plot with fewer Sites.

We wanted to ensure customers knew what they were buying but also to have some level of differentiation between each plot. This was important for both the economy and the game itself (the game is much less fun if everyone's plots are identical).

To address this, we divided Land Plots into Tiers. Each Tier has a guaranteed number of Sites; higher-level Tiers have better production efficiency and more Sites than their lower-level counterparts. This information was released before the sale and confirmed in an Illuvium Improvement Proposal (IIP) voted on by our council.

Note: for the sake of brevity, some details such as Landmarks (the special case of the Tier 5 plots), and the role of different Site types, have been left out of this discussion.

Before purchasing, customers know the plot location, Region, and Tier (from which they can infer the number of Sites). The randomness comes into play in the make-up of the Sites, specifically which Sites are generated and where they are positioned in the plot. For example, consider these two Tier 1 plots:

(a) Tier 1 Plot located at Brightland Steppes (514,445)

(b) Tier 1 Plot located at Brightland Steppes (473,454)

Although both Plots are Tier 1 plots located in the same Region (Brightland Steppes), they have a very different make-up. The first plot (a) is rich in Carbon, as indicated by the three black diamonds on the map, whereas the second plot (b) has a mix of different resources.

Detailed Architecture

This generation of site data is essential to our discussion because it required us to generate metadata in our backend after tokens were sold. Such metadata included, for example, the plot images (see above) used in the IlluviDex and IMX marketplace.

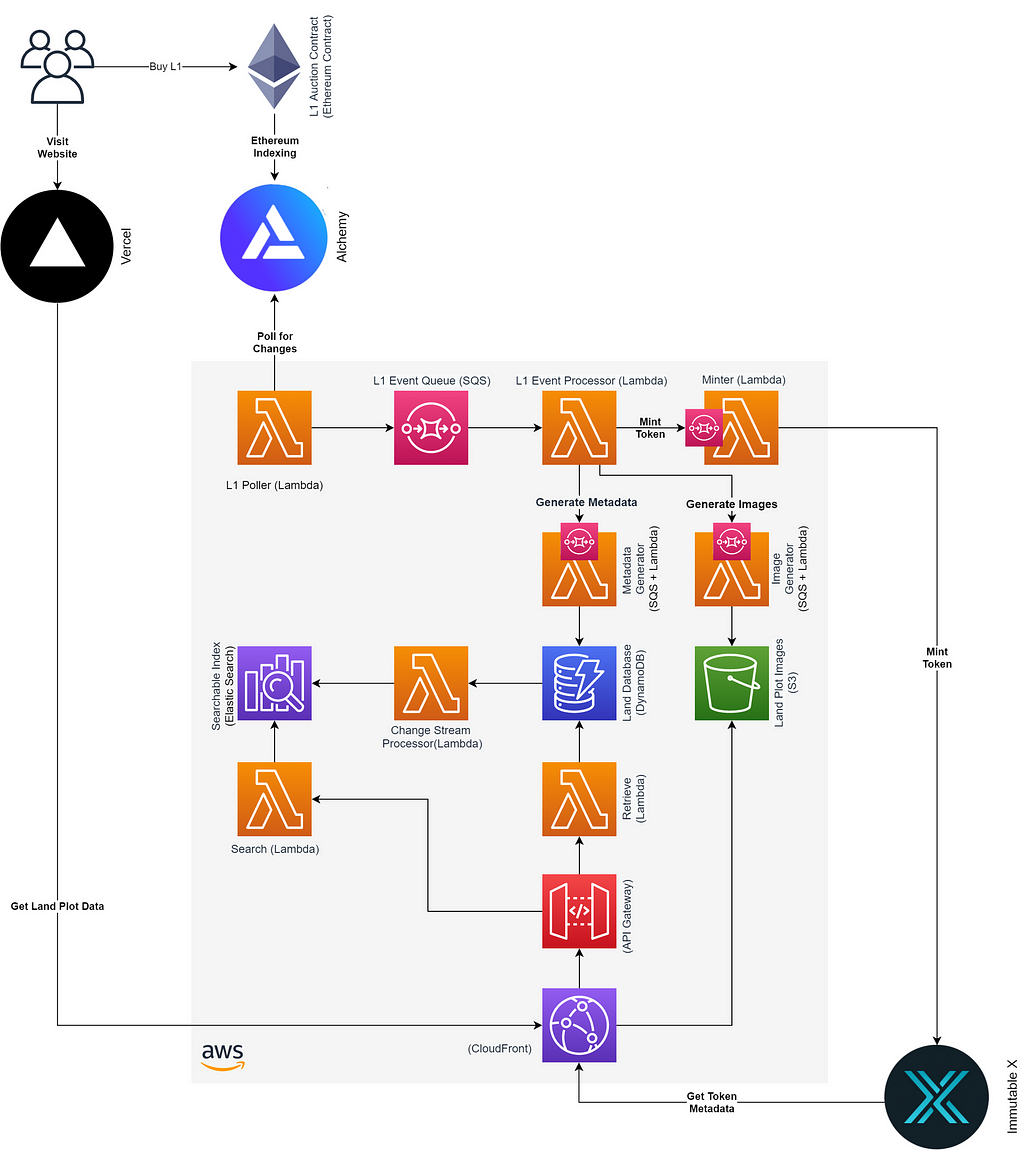

Taking into account our architectural principles, the need to generate data post-sale, and the core process of IMX minting, our final design was as follows:

Land Sale Architecture — Detailed View

We'll describe specific aspects of this design in detail below, but some general design principles are worth noting. The first is simply that this is a serverless solution: all the logic (excluding front-end logic) is in Lambda, and the core data store is in DynamoDB.

The second noteworthy point is that we use SQS in front of almost all transactional Lambdas. We were able to design the system such that dependencies between functions were near zero, and a failure at one point did not mean that we needed to halt the process elsewhere. Rather than a set of steps that must be executed in order, our system uses a fan-out from the L1 Event Processor.

To increase resiliency, all of these queues also have automatic retries in place, with the final landing place of a failing message being a dead letter queue (DLQ).

Of course, such designs are not always possible, and mechanisms like AWS Step Functions have their place. Still, where available, this design pattern provides extraordinary resilience and control. We can throttle individual processing steps, retry failed queries in seconds with a DLQ redrive or even regenerate every image for every blockchain event without impacting other processing.
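To make the pattern concrete, here is a minimal in-memory sketch of a fan-out to independent queues with automatic retries, a dead letter queue and a redrive. It is written in Python for brevity and does not use the SQS API; `RetryQueue`, `fan_out` and `max_receives` are hypothetical names chosen for illustration, not part of our codebase.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple


class RetryQueue:
    """In-memory stand-in for a queue with a retry limit and a dead letter queue."""

    def __init__(self, handler: Callable[[dict], None], max_receives: int = 3) -> None:
        self.handler = handler
        self.max_receives = max_receives
        self.messages: Deque[Tuple[dict, int]] = deque()  # (message, failed receives)
        self.dead_letters: List[dict] = []

    def send(self, message: dict) -> None:
        self.messages.append((message, 0))

    def drain(self) -> None:
        """Deliver messages until the queue is empty, retrying failures."""
        while self.messages:
            message, receives = self.messages.popleft()
            try:
                self.handler(message)
            except Exception:
                if receives + 1 >= self.max_receives:
                    self.dead_letters.append(message)  # give up: park it in the DLQ
                else:
                    self.messages.append((message, receives + 1))

    def redrive(self) -> None:
        """Move everything in the DLQ back onto the main queue with fresh retry counts."""
        while self.dead_letters:
            self.send(self.dead_letters.pop(0))


def fan_out(event: dict, queues: List[RetryQueue]) -> None:
    """Publish one event to every downstream queue independently."""
    for queue in queues:
        queue.send(dict(event))
```

Because each consumer has its own queue, a failing image renderer (for example) fills only its own DLQ; minting carries on, and the parked messages can be replayed with `redrive()` once the underlying problem is fixed.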

The Contract

Although this article focuses on the backend systems, no conversation about our approach is complete without describing some aspects of the sale contract.

The Dutch Auction itself is built on selling tokens in batches, which we call sequences, and on a decaying price function that returns the price for a given tier, sequence and timestamp. It is interesting but not relevant to the technical aspects of gas savings (as they read this, I'm sure our blockchain devs are protesting, having tweaked and optimised the algorithm to wring every last shred of gas savings from it).

Storing Pre-mint Data

Eclipsing these optimisations was a line of exploration that started with a simple question about where to save the pre-mint plot data: where do we store the list of tokens, their locations, tiers, sequence IDs, and so on? Without such information, the sale can't function, but storing 20,000 complex records in the contract was prohibitively expensive.

One idea was to store these in IPFS. Although this had some merit, it was still relatively complex and expensive (e.g. obviously, we couldn't read the entire 20,000 records on every contract interaction, so at the very least, an indexing scheme would be required).

The idea we settled on was interesting. We chose not to store the data in the contract at all and instead store it in our backend and let the buyer pass it into the contract via our website! This might raise some eyebrows; if the buyer is passing in the data, how do we ensure they are passing in the correct data? The solution lies with Merkle trees. We construct a Merkle tree of the pre-mint land sale data, and the backend stores, along with the pre-mint data, the Merkle proof for each leaf (plot). The contract need only store the Merkle root and verify that the input data, combined with the supplied proof, hashes back to that root.
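For readers new to the technique, the following is a minimal Python sketch of building a Merkle tree over plot records, extracting a proof for one plot and verifying it against the root. It is illustrative only: it uses SHA-256 from the standard library and a made-up string encoding for the leaf, whereas an Ethereum contract would typically hash ABI-encoded fields with keccak256. The function names and the plot fields are assumptions.

```python
import hashlib
from typing import List


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def hash_pair(a: bytes, b: bytes) -> bytes:
    # Sort the pair so that verification needs no left/right flags.
    return h(a + b) if a <= b else h(b + a)


def leaf_hash(plot: dict) -> bytes:
    # Hypothetical encoding, for illustration only.
    encoded = "|".join(f"{key}={plot[key]}" for key in sorted(plot))
    return h(encoded.encode())


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """Return every level of the tree, leaves first, root last."""
    levels = [leaves]
    while len(levels[-1]) > 1:
        level, parents = levels[-1], []
        for i in range(0, len(level), 2):
            if i + 1 < len(level):
                parents.append(hash_pair(level[i], level[i + 1]))
            else:
                parents.append(level[i])  # an unpaired node is promoted unchanged
        levels.append(parents)
    return levels


def get_proof(levels: List[List[bytes]], index: int) -> List[bytes]:
    """Collect the sibling hashes on the path from leaf `index` to the root."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append(level[sibling])
        index //= 2
    return proof


def verify(root: bytes, plot: dict, proof: List[bytes]) -> bool:
    """What the contract does: rebuild the root from the input data and proof."""
    node = leaf_hash(plot)
    for sibling in proof:
        node = hash_pair(node, sibling)
    return node == root
```

The backend would run `build_levels` once and store each plot alongside its proof; the verifier holds only the root. A buyer who alters any field of the plot data changes the leaf hash, so the recomputed root no longer matches and the purchase is rejected.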

Although this technique is likely familiar to blockchain developers, for others looking to find out more, you can start at the Wikipedia entry: https://en.wikipedia.org/wiki/Merkle_tree.

Storing Post-purchase Data

After purchase, we also needed to generate and store the Site data (the positions and Site types used in the game and our sample plot images from above). We had concerns over the amount of data being stored (at higher tiers, there can be more than 20 data items), particularly for those buyers who wanted to take their tokens to L1. Our solution here was aligned with the pre-mint storage solution. We don't store this data!

We are big fans of deterministic algorithms; they drive our Illuvium Arena game, and we used a similar approach in the contracts. Rather than store this data, we simply provide a view function that, given a seed value stored on-chain, can generate the Site data deterministically.

The seed value is pseudo-random, derived from the block where the purchase event is confirmed, but in this context, there is no concern with manipulation. Not only is the value hard to manipulate in a meaningful way (the view function is chaotic, and you have limited control over the assigned block number due to the Dutch Auction format), but there is also little to no value in manipulating the seed. There is not enough information about the game to make definitive statements about one plot make-up (within a given Tier) being more valuable than another.

We use the same view function in the backend to generate and store metadata and plot images for the IMX marketplace. Importantly, anyone can verify that this backend data is aligned with the blockchain data by running the view function from the contract.
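The idea of regenerating Site data from a seed rather than storing it can be sketched as follows. This is not the contract's algorithm: the hashing scheme, the grid size, and the Site type names other than Carbon are placeholders invented for the example. The only point is that the same seed always yields the same layout, so anyone can recompute and check it.

```python
import hashlib
from typing import List, Tuple

# "Carbon" appears in the article; the other names are placeholders.
SITE_TYPES = ["Carbon", "ResourceB", "ResourceC"]


def generate_sites(seed: int, site_count: int, plot_size: int = 50) -> List[Tuple[str, int, int]]:
    """Derive (site type, x, y) tuples from a seed, with no stored state.

    Assumes site_count <= plot_size * plot_size so that unique positions exist.
    """
    sites: List[Tuple[str, int, int]] = []
    taken = set()
    counter = 0
    while len(sites) < site_count:
        # Each counter value gives a fresh, reproducible block of pseudo-random bytes.
        digest = hashlib.sha256(f"{seed}:{counter}".encode()).digest()
        counter += 1
        x = int.from_bytes(digest[0:4], "big") % plot_size
        y = int.from_bytes(digest[4:8], "big") % plot_size
        if (x, y) in taken:
            continue  # position already used, draw again
        taken.add((x, y))
        site_type = SITE_TYPES[digest[8] % len(SITE_TYPES)]
        sites.append((site_type, x, y))
    return sites
```

Calling `generate_sites(seed, n)` twice with the same arguments returns identical lists, which is the property that lets a backend and an on-chain view function agree without either one storing the result.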

The IlluviDex

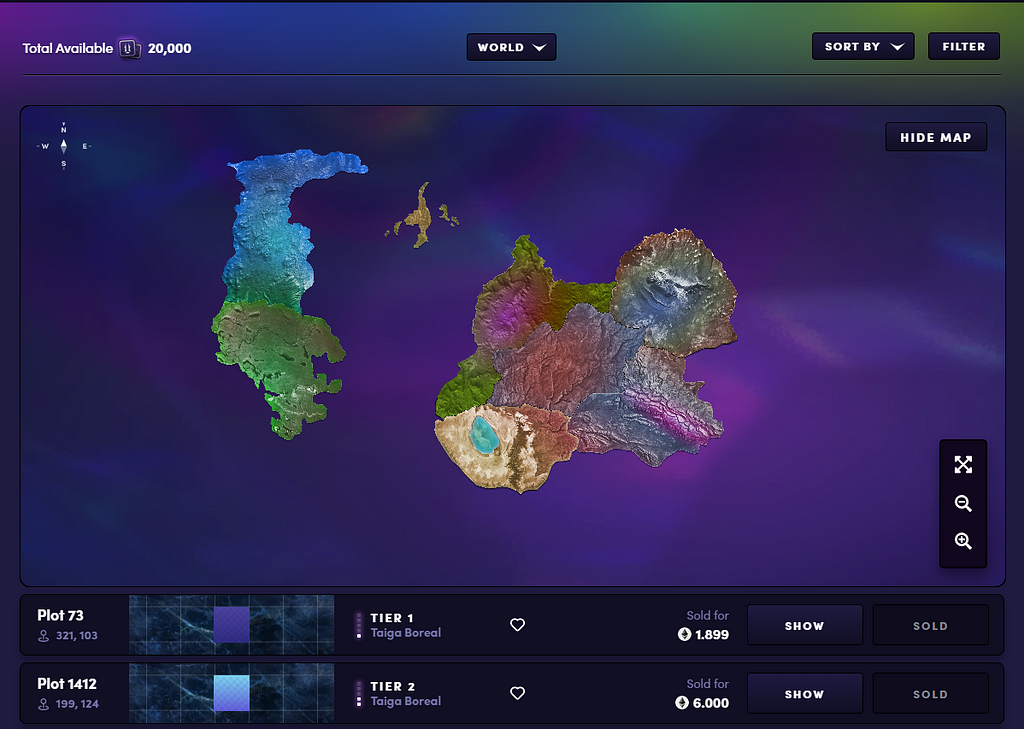

As mentioned above, the focus of this article is the backend systems. Yet no conversation about our approach is complete without describing some aspects of the IlluviDex, the web application that hosted the Land Sale. Illuvium strives to make beautiful products, and the IlluviDex is no exception. Let's first stop for a moment to admire this beauty:

An overview of the Illuvium World as seen in the IlluviDex

Zooming in to a specific area of the map and viewing the details of a sold plot

The role of the IlluviDex is at once both simple and complex, easy to explain but hard to get right. Firstly, it needed to allow users to find the lands they wanted to buy, which it did through a myriad of filter options, a favourites system, and the beautifully rendered and searchable map. Secondly, it needed to integrate with the backend and the contract, tying the data together to make the purchasing process as straightforward as possible. Finally, it needed to be available continuously!

Instrumental to this availability was our web hosting and Next.js provider Vercel. Throughout the Land Sale, they provided rock-solid delivery of our web content, regardless of traffic volumes and spikes.

Polling L1

The L1 polling solution is relatively straightforward. We frequently queried Alchemy's Ethereum API to look for new confirmed buy events on our contract. The Alchemy APIs made this particularly simple, allowing us to filter by contract and event hash. New events are published to SQS for subsequent processing.

To keep things efficient, we maintained a pointer to the last successfully processed block in DynamoDB and only queried for events from the last processed block to the latest block (see below for some clarifications on this). Although the Alchemy API also provides options for pushing updates via webhooks or websockets, the polling approach made the most sense for us as only a relatively small number of events can occur within a given period (178 new land plots went on sale each hour, each sequence lasting only 2 hours).

The polling approach also gave us a lot of flexibility; for example, if we wanted to re-process all transactions, we simply updated the lastProcessedBlock pointer to point to the past.
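A single polling pass can be sketched like this. The sketch is in Python and deliberately avoids any real SDK: `get_latest_block`, `get_buy_events` and `publish` are hypothetical callables standing in for the blockchain API and the queue, and the `state` dictionary stands in for the pointer record in DynamoDB.

```python
from typing import Callable, Dict, List


def poll_once(
    state: Dict[str, int],
    get_latest_block: Callable[[], int],
    get_buy_events: Callable[[int, int], List[dict]],
    publish: Callable[[dict], None],
) -> int:
    """Publish buy events newer than the stored pointer, then advance the pointer.

    Returns the number of events published in this pass.
    """
    from_block = state["lastProcessedBlock"] + 1
    to_block = get_latest_block()
    if to_block < from_block:
        return 0  # nothing new since the last pass

    events = get_buy_events(from_block, to_block)
    for event in events:
        publish(event)

    # Advance only after every event was published, so a crash mid-pass
    # causes a re-read on the next pass rather than a skipped block.
    state["lastProcessedBlock"] = to_block
    return len(events)
```

Re-processing is then just a matter of setting `state["lastProcessedBlock"]` to an earlier block before the next pass. Note that this sketch assumes downstream consumers tolerate seeing the same event more than once.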

Handling Re-orgs

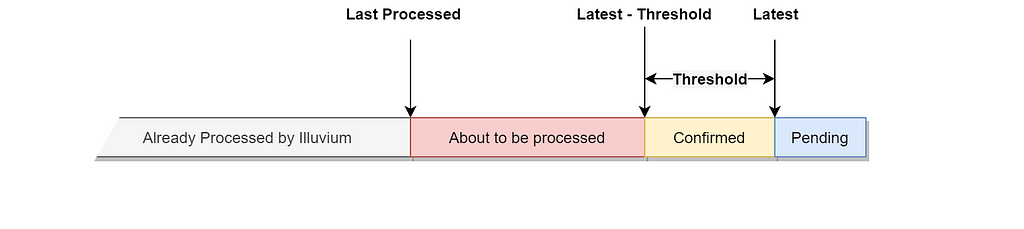

Because we minted outside of L1 (on IMX), we were quite concerned with the ramifications of chain re-organisation (re-orgs). A re-org could lead to a situation where we minted a token on L2, before the re-org, which then becomes invalid after the re-org (because another user ended up 'winning' the plot in the re-organised chain). To combat this, instead of using the Latest confirmed block, we waited for several block confirmations before considering a buy event finalised. Implementation-wise, we simply limited our Alchemy event queries to look only as far as the block number Latest - Threshold.

Block Polling

We used a threshold value of 6, although we did see two re-orgs that went beyond 6 block confirmations during the sale. These did not impact our transactions, but if you are looking to use an approach similar to ours, we would advise you to think carefully about this value and consider going with a higher number of confirmations, such as 10.
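The Latest - Threshold rule reduces to a small calculation of which block range is safe to query. A minimal sketch in Python, with a hypothetical function name and the pointer treated as "last block already processed":

```python
from typing import Optional, Tuple


def confirmed_range(last_processed: int, latest: int, threshold: int = 6) -> Optional[Tuple[int, int]]:
    """Return the inclusive block range that is safe to query, or None if there is none.

    Blocks newer than `latest - threshold` are ignored until they have
    accumulated enough confirmations.
    """
    safe_tip = latest - threshold
    if safe_tip <= last_processed:
        return None  # every sufficiently confirmed block was already processed
    return (last_processed + 1, safe_tip)
```

For example, with the pointer at block 100 and the chain tip at 110, `confirmed_range(100, 110)` gives `(101, 104)`; blocks 105 to 110 are left for a later pass. Raising `threshold` to 10 trades a longer delay for more protection against deep re-orgs.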

Pending and pre-threshold Transactions

One issue with this 6-block delay was that, for approximately 5 minutes (depending on network conditions), a plot sale was "in progress" but not yet minted to L2. This meant browsers that hadn't refreshed could submit transactions for the same token, which would inevitably fail, losing the customer gas in the process.

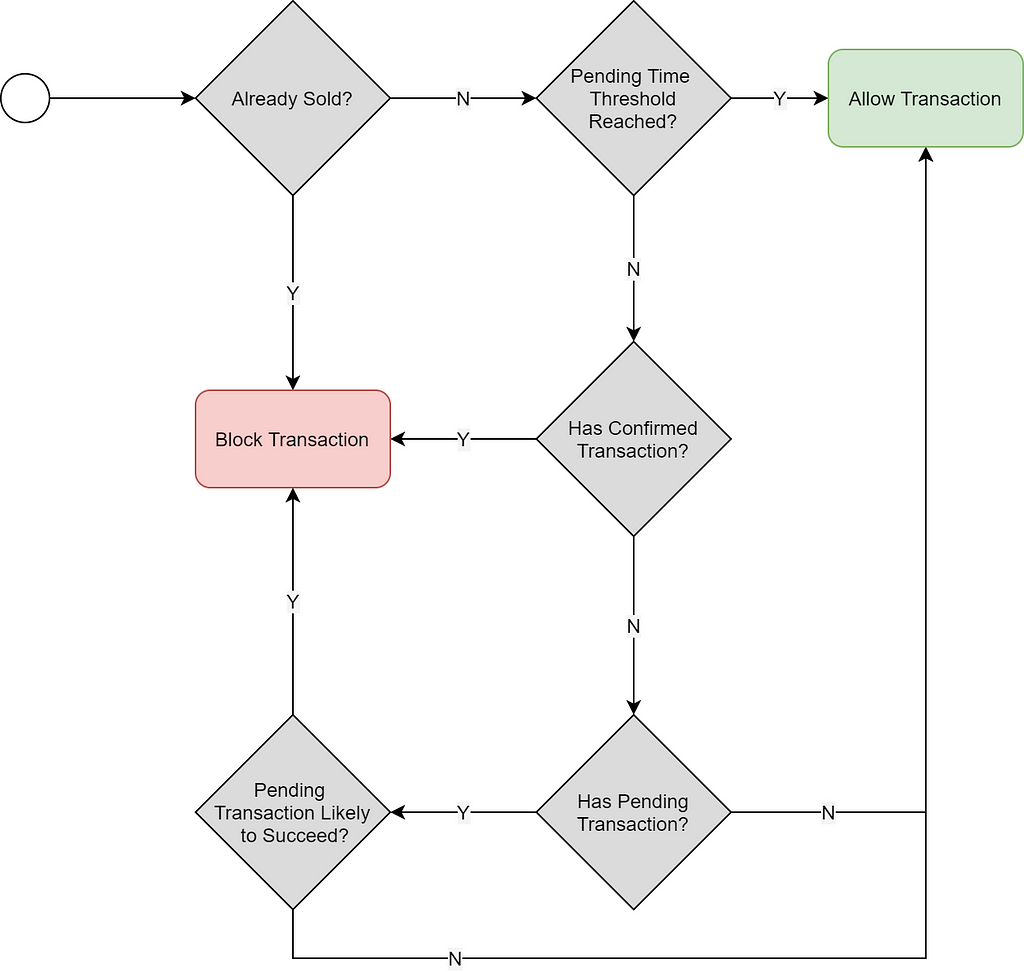

We introduced a Pending Transaction function (not shown in the architecture diagram) to combat this. When a customer attempted to buy on the website, we first checked the Pending Transaction function. In this function, we used the Alchemy APIs that let us query the mempool. If we found a confirmed or pending transaction (likely to succeed based on allocated gas), we would block the customer's purchase and show them a warning that a transaction was in progress.

This, in turn, led to a potential risk that a well-prepared attacker could constantly keep land in a pending state by raising and cancelling transactions. To avoid this, we had a simple rule: allow a land plot to be pending only for a limited time (the pending time). After this period, the Pending Transaction function would not allow the land to be made pending again until a further grace period had passed.

Pending Transaction Logic
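One way to express the pending-time and grace-period rule is sketched below in Python. This is an interpretation of the rule as described, not the production logic: `PendingGate`, `pending_seconds` and `grace_seconds` are hypothetical names, and the result of the mempool lookup is passed in as `has_pending_tx` rather than fetched from any real API.

```python
from typing import Dict


class PendingGate:
    """Decide whether a purchase should be blocked because a plot looks 'pending'."""

    def __init__(self, pending_seconds: float, grace_seconds: float) -> None:
        self.pending_seconds = pending_seconds
        self.grace_seconds = grace_seconds
        self.window_start: Dict[int, float] = {}  # plot id -> start of pending window

    def is_blocked(self, plot_id: int, has_pending_tx: bool, now: float) -> bool:
        start = self.window_start.get(plot_id)

        # Once both the pending time and the grace period have elapsed,
        # the plot may be marked pending again.
        if start is not None and now >= start + self.pending_seconds + self.grace_seconds:
            del self.window_start[plot_id]
            start = None

        if not has_pending_tx:
            return False
        if start is None:
            self.window_start[plot_id] = now  # open a new pending window
            return True
        # Inside the pending time: block. Inside the grace period: let buyers through.
        return now < start + self.pending_seconds
```

With a 60-second pending time and a 120-second grace period, a plot first seen pending at t=0 blocks purchases until t=60, cannot be held pending between t=60 and t=180 no matter how many transactions are raised and cancelled, and can be marked pending again from t=180.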

Minting to L2

Once we had confirmed events, the minting process was simply a matter of creating and signing the appropriate IMX requests. Given we use C# on the backend, we opted to write our own IMX integration code, working directly with the APIs rather than using their convenient JS libraries. This meant additional work to ensure payloads and request signing were correct, but we considered it the approach most aligned with our architecture.

After a token is minted, it is available for viewing on our own IlluviDex and the IMX marketplace immediately. IMX calls our metadata APIs to enrich the token with custom metadata, including the plot images. This metadata is generally populated 5–10 seconds after minting.

Observations

I will leave you with some observations. Firstly, our uptime across the Land Sale for all systems was 100%. There were no outages anywhere.

Secondly, there were only a few hundred errors across tens of millions of invocations.

Additionally, the system automatically resolved all but 5 of these errors by retrying requests.

The 5 errors that remained were external requests that timed out multiple times. These were resolved simply by running a re-drive for the corresponding Dead Letter Queue. These simple 3-click re-drive operations were the only 2 manual actions taken during the entire sale.

I think it's clear, from a technical perspective, that this was an overwhelming success. Allow me to express my thanks to all of our technology team, the wider Illuvium team, and the great partners that made this possible.
